In mid-March 2026, the digital landscape underwent a paradigm shift.

Speaking at the BlackRock U.S. Infrastructure Summit in Washington, D.C., OpenAI CEO Sam Altman set out a vision that could fundamentally change how we think about artificial intelligence. AI, he declared, will no longer be treated as a software subscription or a standalone tool. Instead, the industry is rapidly converging on a model in which intelligence is sold by the token, turning it into a basic utility.

“We see a future where intelligence is a utility like electricity or water,” Altman stated, emphasising that businesses and individuals will simply “buy it from us on a meter.”

At the heart of this macroeconomic shift lies the idea of intelligence as a utility. Rather than purchasing rigid software licences or maintaining expensive in-house data science teams, enterprises are being encouraged to move towards metered consumption of digital brainpower.

For decades, the technology industry has relied on a predictable model of planned obsolescence and costly hardware upgrades. By shifting the burden of complex cognitive processing to centralised data centres, however, the utility model significantly lowers the financial barriers to advanced problem-solving. The intelligence utility is poised to become the invisible, omnipresent infrastructure of the modern enterprise, silently optimising complex global supply chains, drafting intricate legal frameworks and directing fleets of autonomous vehicles. In that world, an organisation’s cognitive capacity would be limited only by its willingness to pay for what it consumes.

The Data Centre Dilemma: Infrastructure for the Intelligence Utility

In this new AI-Metered World, data centres are no longer just storage facilities for cloud computing; they are, in effect, the power plants of the twenty-first century. Just as the Industrial Revolution relied on coal and steam, the cognitive revolution relies on gigawatt-scale facilities churning out raw intelligence. Companies will no longer need vast teams of analysts for routine operations. Instead, they will tap into the grid, buying a steady stream of background intelligence for day-to-day tasks or authorising massive, instantaneous bursts of compute to solve urgent, highly complex problems.

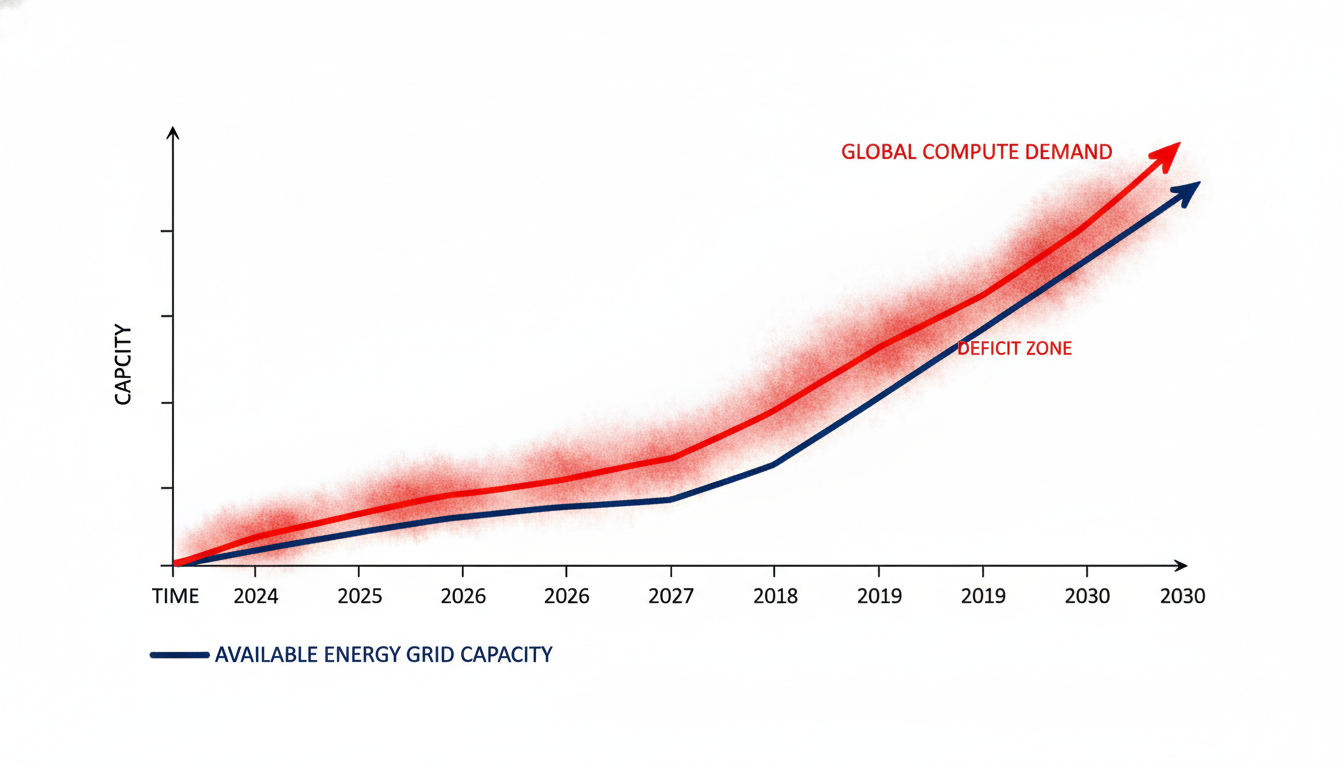

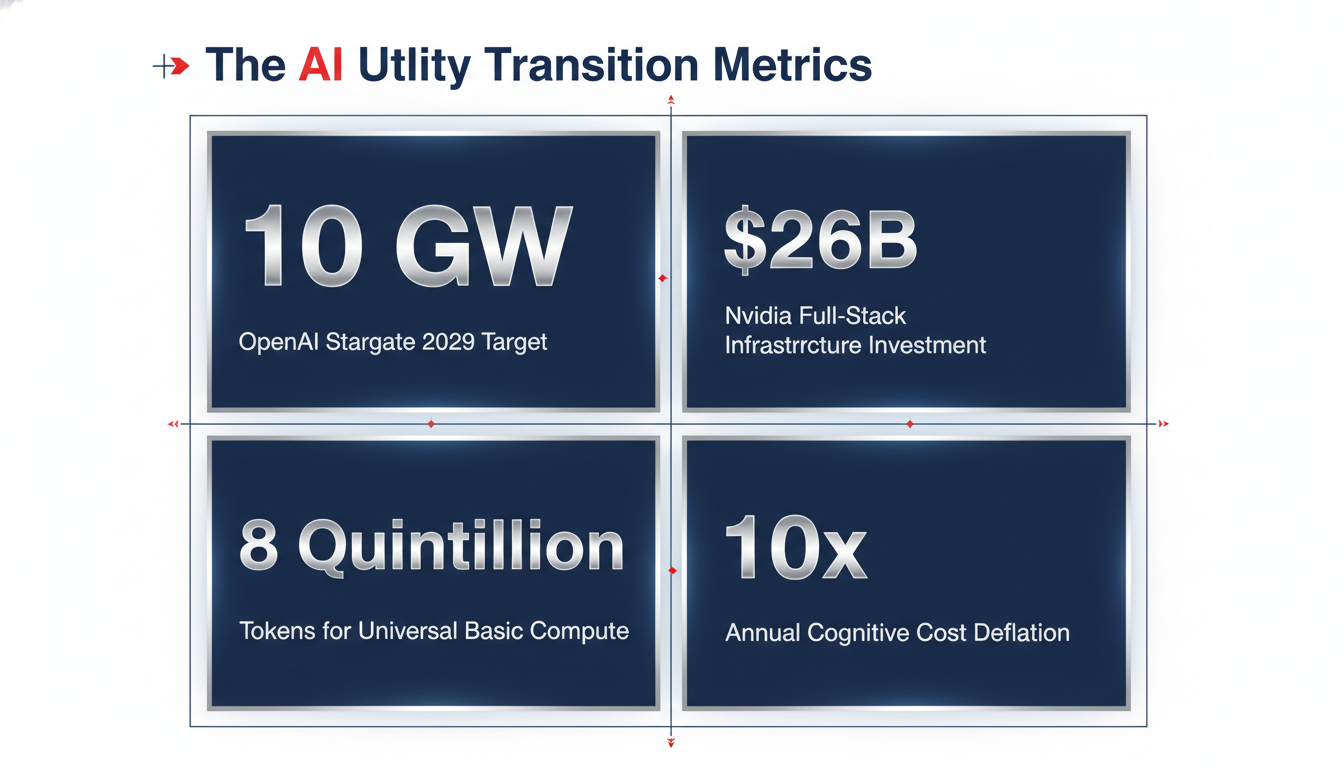

To understand the scale of this transformation, consider the capital already being deployed to build these cognitive power plants. A utility model of intelligence needs an enormous supply of compute to avoid bottlenecks, and an unprecedented infrastructure sprint is already underway. OpenAI, backed by a $110 billion funding war chest, is pushing ahead with the Stargate project, a joint venture with SoftBank, Oracle and Abu Dhabi’s MGX, among others, that aims to build up to 10 gigawatts of AI data centre capacity across the United States by 2029. For perspective, 10 gigawatts is roughly the combined output of ten large nuclear reactors.

Meanwhile, Nvidia has signalled its evolution from hardware vendor to foundational utility provider. A recent Securities and Exchange Commission filing revealed its commitment to invest $26 billion over the next five years in transforming itself into a full-stack AI lab. Nvidia is co-building AI data centres and deploying more than five gigawatts of computing power, positioning itself to control the entire cognitive supply chain, from the underlying silicon to the open-source models at the top of the stack.

These investments suggest that the tech industry views AI not as a product but as the foundational infrastructure of the future economy. The underlying assumption is that demand for synthetic intelligence is virtually limitless, provided the price per unit continues its historical deflationary trend of falling by a factor of ten each year.
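Taken at face value, a tenfold annual decline compounds quickly. As a simple illustration, assuming the rate stays constant (which no trend guarantees), the price per token $p$ after $t$ years, starting from today’s price $p_0$, would be:

$$
p(t) = p_0 \cdot 10^{-t}, \qquad \frac{p(3)}{p_0} = 10^{-3} = \frac{1}{1000}
$$

On that assumption, the same workload would cost one-thousandth as much three years from now.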

The Zero-Employee Unicorns

How exactly will businesses operate when intelligence becomes an on-demand utility?

The transition to an AI-Metered World will fundamentally restructure corporate operations. Today, a company’s intellectual capacity is bound by its headcount. If a pharmaceutical firm needs to analyse a million chemical compounds, it must hire researchers in proportion to the workload, and those researchers are constrained by the human limits of speed, fatigue and cost. In the utility model, intellectual capacity becomes highly elastic: it can expand or contract with demand.

A corporation plugged into the cognitive grid will manage its AI usage much as it manages its electricity or bandwidth. For routine, day-to-day operations, it will draw a low, continuous current of intelligence. This background processing will run autonomously, metered by the kilotoken, quietly keeping the enterprise ticking over.

The truly revolutionary potential, however, lies in the ability to purchase cognitive bursts. When faced with a crisis, such as a zero-day cybersecurity vulnerability, a supply chain collapse caused by geopolitical conflict or the need to rapidly synthesise years of clinical trial data, a company can authorise a massive, temporary spike in its intelligence draw.
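The baseline-plus-burst pattern described above can be sketched as a simple metered bill. This is a hypothetical illustration only: the `Usage` and `metered_bill` names, the price per thousand tokens, the burst premium and the token volumes are all invented for the example and do not reflect any provider’s actual pricing.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """Tokens consumed in one billing period (hypothetical figures)."""
    baseline_tokens: int  # low, continuous background draw
    burst_tokens: int     # temporary spike authorised for an urgent problem


def metered_bill(usage: Usage, price_per_kilotoken: float,
                 burst_multiplier: float = 1.0) -> float:
    """Return the cost of one period's usage, billed per 1,000 tokens.

    burst_multiplier is a hypothetical premium on burst capacity;
    1.0 bills bursts at the same rate as baseline usage.
    """
    baseline_cost = usage.baseline_tokens / 1000 * price_per_kilotoken
    burst_cost = (usage.burst_tokens / 1000 * price_per_kilotoken
                  * burst_multiplier)
    return baseline_cost + burst_cost


# Invented example: a quiet month versus a month with one large burst.
quiet = Usage(baseline_tokens=50_000_000, burst_tokens=0)
crisis = Usage(baseline_tokens=50_000_000, burst_tokens=400_000_000)

print(f"Quiet month:  {metered_bill(quiet, 0.002):.2f}")
print(f"Crisis month: {metered_bill(crisis, 0.002, burst_multiplier=1.5):.2f}")
```

Because the bill in this sketch scales linearly with tokens consumed, the crisis month costs more without any change in headcount, which is the elasticity the section describes. Real pricing may differ, for example by charging separately for input and output tokens.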

As a result, nimble start-ups will be able to build and scale complex, intelligence-rich consumer products without hiring large, expensive teams of specialised software engineers or junior data scientists. The barrier to entering and disrupting entrenched legacy industries will be reduced to the cost of the API calls needed to run the foundation models. Some observers predict that we will soon see the first ‘zero-employee unicorns’: highly automated billion-dollar companies run by a single founder who uses a metered grid of specialised AI agents to handle logistics, marketing, product development and customer service on a global scale.

A Bright Future

In conclusion, the soaring rhetoric from the recent BlackRock Infrastructure Summit is not mere executive bravado; it is a clear-eyed, calculated articulation of the technology industry’s ultimate financial strategy. The transition from software as a localised product to intelligence as a universally metered utility is a fundamental and irreversible reorganisation of global computing architecture. While it promises to democratise access to powerful analytical capabilities that could change the world, it comes at the steep cost of deepening society’s dependence on fragile, immensely energy-intensive infrastructure controlled by a handful of corporate giants.

As digital meters begin spinning in data centres around the world, society must urgently confront a sobering fact: our collective capacity for thought, creativity and problem-solving is increasingly becoming a commodity rented from corporations. That commodity is subject to ever-shifting terms of service dictated by the ultimate landlords of the digital age: the companies that own the data centres.