The era of the experimental AI pilot is over; the era of the gigawatt-scale AI factory has begun. In a move that reverberated through Wall Street and the data center industry alike this week, Vertiv (NYSE: VRT) finalized a transformative $4.6 billion liquidity overhaul on March 5, 2026. The financing is not merely a balance sheet adjustment; it funds a strategic war chest aimed at the rapidly growing market for high-density liquid cooling. As high-performance computing (HPC) and generative AI workloads push rack densities beyond the practical limits of air cooling, the industry is being forced to evolve: thermal management is becoming the primary constraint on digital growth.

For the last decade, high-density liquid cooling was viewed as a specialized solution for supercomputers or niche crypto-mining operations. However, the convergence of Nvidia’s Blackwell and upcoming Rubin architectures with the insatiable appetite of hyperscalers has fundamentally altered the landscape. We are no longer discussing incremental efficiency gains; we are facing an existential infrastructure pivot. The events of the last seven days, anchored by Vertiv’s capitalization and echoed by strategic shifts at MWC 2026, confirm that liquid cooling is now the central pillar of next-generation data center design.

Figure: The Thermal Inflection Point. Projected rack power density vs. cooling technology viability (2023–2028). Source: Market Intelligence & Thermal Engineering Forecasts.

The Financialization of Thermal Physics

The headline figure of $4.6 billion tells a story of industrial scaling. Vertiv’s decision to refinance and secure this liquidity is explicitly aimed at addressing a $15 billion backlog dominated by hyperscale demand for thermal management. This is not speculative R&D; this is capital deployment for mass manufacturing. The market for high-density liquid cooling is transitioning from a bespoke engineering challenge to a supply chain logistics game. Hyperscalers like Microsoft, Google, and Meta are no longer testing liquid cooling; they are mandating it for their 2026–2027 builds.

Strategic KPI Executive Grid

Capital secured in March 2026 to accelerate manufacturing capacity for liquid cooling infrastructure, directly addressing the hyperscale order backlog.

Nvidia’s “Rubin” architecture roadmap suggests single-rack power consumption reaching 1 megawatt, mandating 100% direct-to-chip liquid cooling.

Vertiv’s reported surge in Q4 orders, driven almost exclusively by AI-related infrastructure needs, signaling a massive disconnect between supply and demand.

The MWC 2026 Validation

Beyond the financials, the technological validation of high-density liquid cooling was on full display at the Mobile World Congress (MWC) in Barcelona earlier this week. Huawei unveiled its “Large Temperature Difference Cooling System,” a solution specifically engineered to improve the thermal gradient efficiency in high-density environments. This innovation is critical because as coolant temperatures rise to support heat reuse and efficiency, maintaining the necessary temperature delta (ΔT) to cool the chip becomes increasingly difficult.
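Why ΔT matters can be seen from the steady-state heat balance Q = ṁ · c_p · ΔT. The sketch below is a minimal illustration, not a vendor sizing method. It assumes plain water properties (real loops often run glycol mixtures with different values), treats ΔT as the coolant temperature rise from inlet to outlet, and uses a hypothetical helper name, `required_flow_lpm`. The 100 kW load is an illustrative input only.

```python
# Minimal sketch: coolant flow needed to carry a heat load at a given
# inlet-to-outlet temperature rise, from Q = m_dot * c_p * delta_T.
# Assumption: plain water near room temperature; glycol mixes differ.

WATER_CP_J_PER_KG_K = 4186.0      # specific heat of water (approximate)
WATER_DENSITY_KG_PER_M3 = 997.0   # density of water (approximate)


def required_flow_lpm(heat_load_w: float, delta_t_k: float) -> float:
    """Return the volumetric flow in litres per minute (hypothetical helper)."""
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_load_w / (WATER_CP_J_PER_KG_K * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY_KG_PER_M3
    return volume_flow_m3_s * 60_000.0  # m^3/s -> L/min


if __name__ == "__main__":
    # Illustrative inputs only: one 100 kW rack at several temperature rises.
    for delta_t in (5.0, 10.0, 20.0):
        flow = required_flow_lpm(100_000.0, delta_t)
        print(f"dT = {delta_t:4.1f} K -> {flow:6.1f} L/min")
```

Under these assumptions, doubling the usable temperature rise halves the flow the loop must deliver, which is why the available ΔT becomes a first-order design variable as rack power climbs.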

Simultaneously, HPE’s CEO Antonio Neri described liquid cooling as an “unfair advantage” for the company, citing their heritage in Cray supercomputing. This statement is profound. It suggests that knowledge of fluid dynamics and plumbing—skills once relegated to facilities management—is now a core competitive differentiator for IT hardware vendors. The integration of high-density liquid cooling is forcing a merger between IT and OT (Operational Technology). The server is no longer just a compute node; it is a thermo-hydraulic system.

Global Impact Matrix: Cooling Readiness

Regional analysis of high-density infrastructure adoption and grid constraints.

| Region | Adoption Velocity | Critical Constraint | Strategic Outlook |
|---|---|---|---|
| North America | HIGH | Grid Capacity & Power Availability | Massive retrofit of legacy sites; aggressive greenfield “AI Factory” builds. |
| EMEA | MEDIUM | Regulatory (Heat Reuse) & Water | Focus on closed-loop systems and district heating integration (EU Directive). |
| APAC (ex. China) | HIGH | Ambient Temperature & Space | Rapid leapfrogging to immersion cooling due to high humidity/heat environments. |
| China | VERY HIGH | Chip Supply Chain Isolation | State-mandated PUE targets driving domestic liquid cooling innovation (Huawei). |

Direct-to-Chip vs. Immersion: The Battle for Density

Within high-density liquid cooling, a technical schism is widening. The current market consensus, driven by Nvidia’s HGX and GB200 reference architectures, heavily favors single-phase Direct-to-Chip (DTC) cooling. This method mounts cold plates directly on the GPU and CPU and circulates fluid between them and a coolant distribution unit (CDU). It balances extreme cooling performance with maintainability, allowing technicians to service racks without dealing with open baths of dielectric fluid.

However, news from the edge this week, specifically Dell’s launch of the PowerEdge XR9700, is a reminder that alternatives to facility-plumbed DTC retain a strong value proposition for specific use cases. The XR9700 is a ruggedized, closed-loop liquid-cooled server designed for “unprotected outdoor environments.” This highlights the versatility of liquid cooling: it’s not just about handling the heat of a 100 kW rack; it’s also about sealing the hardware against dust and humidity and ruggedizing it against vibration. While DTC wins in the hyperscale data center, immersion and closed-loop systems are carving out a critical niche at the edge.

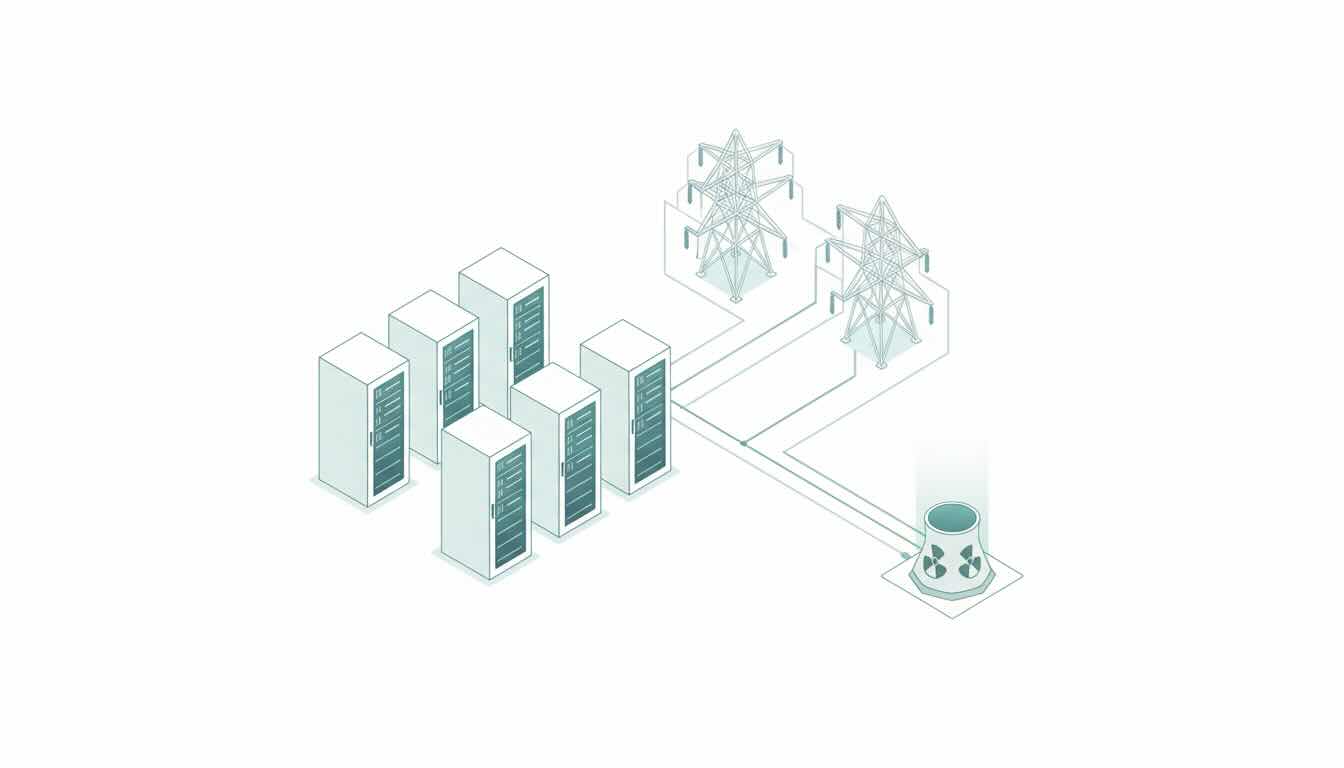

Strategic Flow: The Thermodynamics of an AI Factory

Heat Source (~1000 W) → Direct Liquid Capture → Coolant Distribution Unit → Atmospheric Rejection → Energy Recovery

Process flow for a typical high-density liquid cooling deployment.
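The stages in the flow above can be read as a simple heat budget. The following is a rough sketch under stated assumptions, not a model of any specific product: the ~1000 W per-chip figure comes from the flow itself, while the chip count, the share of heat captured by the liquid loop (`capture_fraction`), and the share of that heat reused (`recovery_fraction`) are hypothetical inputs. It also ignores heat from components other than the chips.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackHeatBudget:
    """Where a rack's heat ends up, in watts (illustrative model only)."""
    total_w: float         # heat generated by the chips
    liquid_w: float        # heat captured by cold plates and sent to the CDU
    residual_air_w: float  # heat left for room air cooling
    recovered_w: float     # liquid-loop heat reused (energy recovery)
    rejected_w: float      # liquid-loop heat rejected to the atmosphere


def rack_heat_budget(
    chip_count: int,
    chip_power_w: float = 1000.0,     # ~1000 W per heat source, per the flow
    capture_fraction: float = 0.8,    # hypothetical share captured by liquid
    recovery_fraction: float = 0.0,   # hypothetical share of liquid heat reused
) -> RackHeatBudget:
    if chip_count < 0 or chip_power_w < 0:
        raise ValueError("chip_count and chip_power_w must be non-negative")
    for name, value in (
        ("capture_fraction", capture_fraction),
        ("recovery_fraction", recovery_fraction),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")

    total_w = chip_count * chip_power_w
    liquid_w = total_w * capture_fraction
    recovered_w = liquid_w * recovery_fraction
    return RackHeatBudget(
        total_w=total_w,
        liquid_w=liquid_w,
        residual_air_w=total_w - liquid_w,
        recovered_w=recovered_w,
        rejected_w=liquid_w - recovered_w,
    )


if __name__ == "__main__":
    # Hypothetical rack: 72 chips, 80% liquid capture, 25% heat reuse.
    print(rack_heat_budget(72, capture_fraction=0.8, recovery_fraction=0.25))
```

The point of splitting the budget this way is that the residual air load does not vanish in a direct-to-chip deployment; it still has to be handled by conventional room cooling.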

The Retrofit Challenge and Future Outlook

While the future is liquid, the present is a messy hybrid. The majority of the world’s data center capacity sits in facilities designed for air cooling, with raised floors and computer room air conditioner (CRAC) units. Introducing high-density liquid cooling into these environments presents major engineering challenges. Floor loading capacities must be re-evaluated for heavy fluid-filled racks. Plumbing must be routed to the row, introducing the risk of leaks in server rooms that have historically been kept bone-dry.

Vertiv’s strategy, emboldened by its new liquidity, appears to be a two-pronged attack: dominate the greenfield “AI Factory” market with modular, integrated liquid cooling solutions, while offering “liquid-to-air” CDUs that allow legacy data centers to deploy high-density racks without retrofitting the entire facility’s water loop. This “hybrid” approach is the bridge to the future.
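A liquid-to-air CDU still rejects the loop's heat into room air, so the air side of a legacy facility continues to bound how much rack power it can absorb. The sketch below estimates the airflow involved, using the same heat balance applied to air. It is a rough illustration, assuming approximate sea-level air properties; the helper name `required_airflow_m3_s` and the example load and temperature rise are hypothetical.

```python
# Minimal sketch: airflow needed to carry away the heat a liquid-to-air
# CDU dumps into the room, from Q = rho * V_dot * c_p * delta_T.
# Assumption: dry air at roughly sea-level conditions.

AIR_DENSITY_KG_PER_M3 = 1.2     # approximate density of air
AIR_CP_J_PER_KG_K = 1005.0      # approximate specific heat of air
M3_PER_S_TO_CFM = 2118.88       # cubic metres per second -> cubic feet per minute


def required_airflow_m3_s(heat_load_w: float, air_delta_t_k: float) -> float:
    """Return the airflow in m^3/s for a given air temperature rise."""
    if heat_load_w < 0:
        raise ValueError("heat_load_w must be non-negative")
    if air_delta_t_k <= 0:
        raise ValueError("air_delta_t_k must be positive")
    return heat_load_w / (AIR_DENSITY_KG_PER_M3 * AIR_CP_J_PER_KG_K * air_delta_t_k)


if __name__ == "__main__":
    # Illustrative inputs only: 100 kW rejected with a 15 K air temperature rise.
    flow = required_airflow_m3_s(100_000.0, 15.0)
    print(f"{flow:.2f} m^3/s ({flow * M3_PER_S_TO_CFM:,.0f} CFM)")
```

Under these assumptions a single 100 kW rack needs several cubic metres of air per second, which suggests why this approach works as a bridge for a limited number of dense racks rather than as a substitute for a facility water loop.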

As we look toward the second half of 2026, the data points from this week—Vertiv’s financing, Huawei’s innovation, and the steady march of Nvidia’s roadmap—coalesce into a singular truth: High-density liquid cooling has graduated. It is no longer an optional upgrade; it is the fundamental prerequisite for the existence of modern AI infrastructure. The companies that master this thermal transition will define the physical layer of the intelligence age.