The history of the digital age is written not just in lines of code, but in the thermal dynamics of the facilities that house them. For decades, the data center industry operated with largely unmeasured inefficiency, and uptime was the only metric that truly mattered. However, as the internet scaled from a curiosity to the backbone of the global economy, the energy footprint of these facilities became impossible to ignore. The effort to improve data center PUE (Power Usage Effectiveness) is more than a matter of cooling systems; it is a story of architectural evolution, geographical strategy, and the relentless pursuit of thermodynamic efficiency.

The Pre-Metric Era: Chaos in the Server Room

Before 2007, the concept of energy efficiency in data centers was largely anecdotal. Facilities were designed with a “belt and suspenders” approach, where massive Computer Room Air Conditioning (CRAC) units blasted frigid air indiscriminately across rows of servers. The prevailing engineering philosophy was defined by a fear of overheating, leading operators to keep facilities at meat-locker temperatures, often as low as 18°C (64°F). During this era, hot exhaust air routinely mixed with cold supply air, so cooling systems had to work far harder than the IT load alone required.

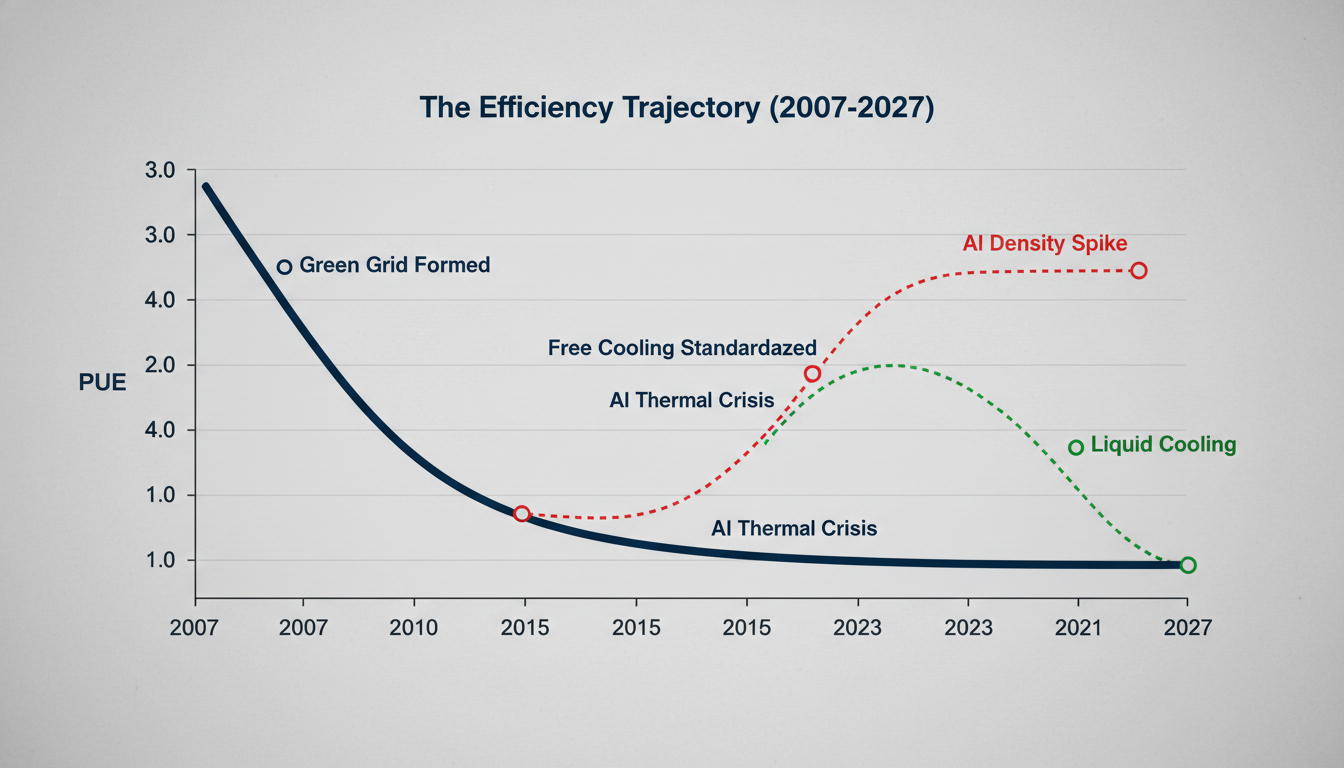

It was in this chaotic environment that a consortium of industry leaders, including AMD, APC, Dell, HP, IBM, Intel, Microsoft, and Sun Microsystems, formed The Green Grid. In 2007, they formalized the PUE metric, calculated as the ratio of total facility energy to IT equipment energy. This was the inflection point. For the first time, operators had a standardized ruler to measure their waste. Early audits were shocking: the industry average PUE was estimated at around 2.5 or higher. At a PUE of 2.5, every watt delivered to a server requires another 1.5 watts for cooling, lighting, and power distribution losses.
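The ratio is simple enough to sketch in a few lines. The function names and the meter readings below are illustrative assumptions, not figures from any real audit.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_per_it_unit(pue_value: float) -> float:
    """Energy spent on cooling, lighting, and distribution per unit of IT energy."""
    return pue_value - 1.0


# Hypothetical readings: 2,500 kWh at the utility meter, 1,000 kWh reaching IT gear.
legacy = pue(2500.0, 1000.0)
print(f"PUE = {legacy:.2f}, overhead per IT kWh = {overhead_per_it_unit(legacy):.2f}")
```

Note that IT energy is the denominator, so every efficiency measure aimed at cooling or power distribution works by shrinking the numerator.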

[Figure: The Efficiency Gap. Historical PUE improvements vs. theoretical limits]

Phase I: The Low-Hanging Fruit (2008–2012)

Following the standardization of the metric, the initial rush to improve data center PUE focused on airflow management. This period saw the widespread adoption of hot and cold aisle containment. By arranging server racks so that intakes faced intakes and exhausts faced exhausts, and by physically sealing these aisles with doors or plastic curtains, operators prevented hot exhaust air from recirculating into server intakes. This seemingly low-tech intervention allowed facilities to raise supply air temperatures without risking equipment failure.

Simultaneously, the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) revised its thermal guidelines, expanding the recommended operating temperature envelopes. Although these are industry guidelines rather than regulations, the shift was critical. It gave facility managers permission to stop cooling server rooms to 18°C and let them drift up to 24°C or even 27°C. The physics were undeniable: raising the set point allowed chillers to work less, which reduced total facility energy, the numerator in the PUE equation, while the IT load in the denominator stayed the same. By 2012, best-in-class enterprise data centers had dropped their PUEs to around 1.6 or 1.7.

Phase II: The Hyperscale Disruption (2013–2018)

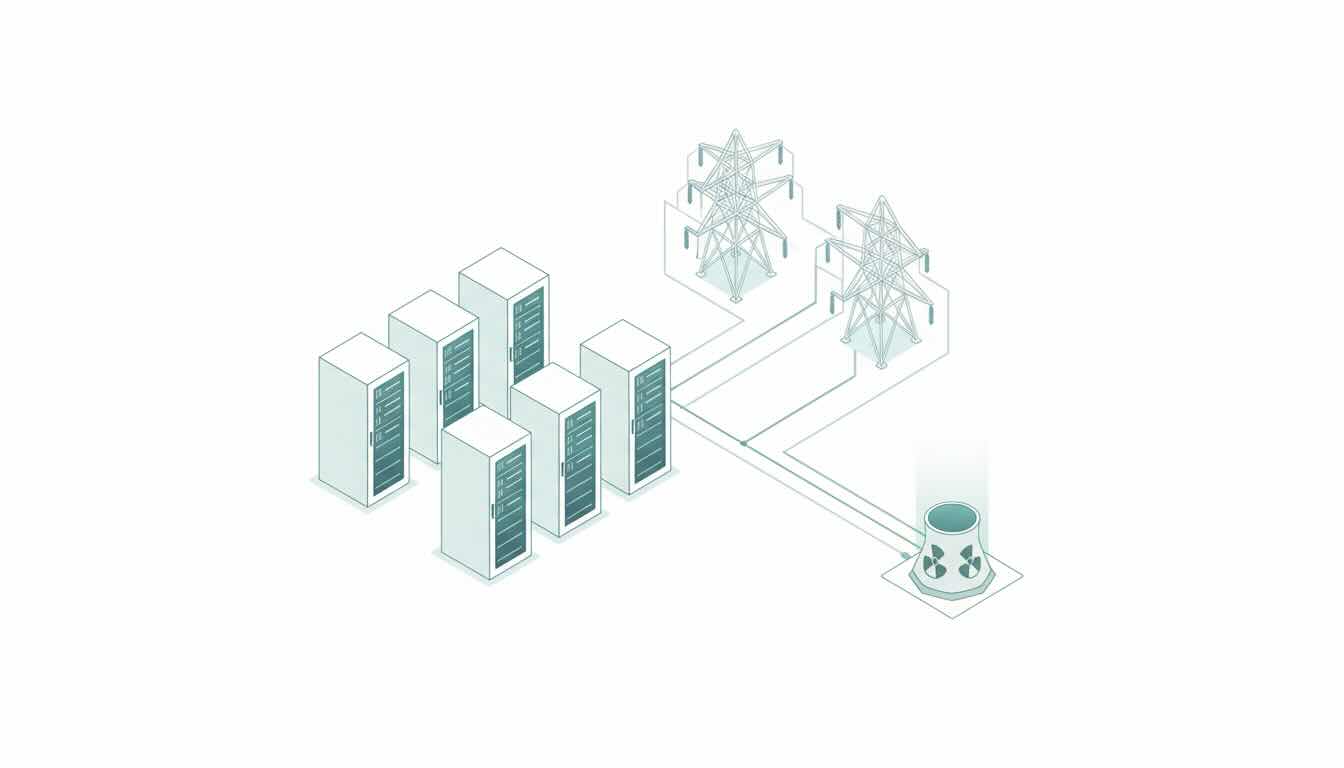

While enterprise facilities tweaked airflow, the emerging hyperscalers—Google, Facebook (Meta), and Microsoft—were reimagining the entire data center topology. They realized that to fundamentally change the efficiency curve, they had to eliminate the mechanical chiller entirely. This era was defined by free cooling (or economization). By locating data centers in cooler climates—such as the Nordics or the Pacific Northwest—facilities could use outside air to cool servers for nearly 95% of the year.

Google took this a step further by publishing their efficiency best practices, revealing that they were running custom servers stripped of unnecessary components (like video cards and excess chassis metal) to reduce heat generation at the source. They also began utilizing evaporative cooling towers and nearby water sources (like seawater or industrial canals) to reject heat. During this period, Google reported PUEs as low as 1.12 across their fleet. This proved that the most effective way to improve data center PUE was not to build better air conditioners, but to design facilities that worked in harmony with the local environment.

Architectural Shifts: How Hyperscalers Moved to Improve Data Center PUE

The pursuit of lower PUEs drove a divergence in hardware design. The Open Compute Project (OCP), launched by Facebook in 2011, open-sourced data center hardware designs. OCP racks were designed with a centralized power shelf, converting AC to DC power at the rack level rather than at every individual server. This reduced power conversion losses, a hidden vampire load that inflated PUE figures. Traditional data centers lost 5-10% of power in the successive voltage conversions between the utility feed and the CPU. OCP designs cut these losses sharply by removing conversion stages.
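Conversion losses compound because each stage only passes on what the previous stage delivered, so the end-to-end efficiency is the product of the stage efficiencies. The sketch below illustrates that arithmetic; the stage efficiencies are hypothetical values chosen for illustration and are not measurements of OCP or any other real hardware.

```python
import math


def chain_efficiency(stage_efficiencies):
    """End-to-end efficiency of conversion stages connected in series."""
    for eff in stage_efficiencies:
        if not 0.0 < eff <= 1.0:
            raise ValueError("each stage efficiency must be in (0, 1]")
    return math.prod(stage_efficiencies)


def loss_fraction(stage_efficiencies):
    """Fraction of input power lost across the whole chain."""
    return 1.0 - chain_efficiency(stage_efficiencies)


# Hypothetical three-stage chain (for example a UPS, a transformer, a server supply).
multi_stage = [0.97, 0.98, 0.96]
# Hypothetical single rack-level conversion stage.
consolidated = [0.975]

print(f"multi-stage loss:  {loss_fraction(multi_stage):.1%}")
print(f"consolidated loss: {loss_fraction(consolidated):.1%}")
```

Even when every individual stage looks efficient, three stages in series can land in the 5-10% loss range described above, which is why removing a stage tends to matter more than polishing one.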

Furthermore, this era saw the introduction of AI-driven infrastructure management. In 2016, DeepMind (a Google subsidiary) applied machine learning algorithms to Google’s data center cooling controls. The AI analyzed historical sensor data (temperatures, power, pump speeds, and setpoints) to predict thermal loads and adjust cooling systems in real time. The reported result was a reduction of up to 40% in the energy used for cooling, demonstrating that software could find efficiencies that manual tuning had missed.

Phase III: The High-Density Heat Wall (2019–Present)

In the most recent phase, the industry faces a new thermodynamic adversary: Artificial Intelligence. The rise of generative AI and Large Language Models (LLMs) has necessitated the deployment of high-performance GPU clusters. These racks are no longer drawing 5-10 kW; they are pushing 50 kW to 100 kW per rack. Air, no matter how well-contained or chilled, lacks the volumetric heat capacity to carry this much thermal energy away from the chips efficiently.
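A rough calculation shows why air runs out of headroom. The heat a fluid stream carries is Q = ρ · V̇ · c_p · ΔT, so the required volumetric flow is V̇ = Q / (ρ · c_p · ΔT). The sketch below uses approximate room-temperature properties for air and water; the 10 K temperature rise is an assumption for illustration, and water stands in for liquid coolants generally (dielectric immersion fluids have different properties).

```python
# Approximate fluid properties near room temperature.
AIR_DENSITY = 1.2       # kg/m^3
AIR_CP = 1005.0         # J/(kg*K)
WATER_DENSITY = 998.0   # kg/m^3
WATER_CP = 4186.0       # J/(kg*K)


def volumetric_flow_m3_per_s(heat_w, density, cp, delta_t_k):
    """Flow needed to carry heat_w, from Q = rho * V_dot * cp * delta_T."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_w / (density * cp * delta_t_k)


RACK_HEAT_W = 100_000.0  # a 100 kW rack, the upper figure quoted above
DELTA_T_K = 10.0         # assumed coolant temperature rise across the rack

air_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, AIR_DENSITY, AIR_CP, DELTA_T_K)
water_flow = volumetric_flow_m3_per_s(RACK_HEAT_W, WATER_DENSITY, WATER_CP, DELTA_T_K)

print(f"air:   {air_flow:.2f} m^3/s")
print(f"water: {water_flow * 1000:.2f} L/s")
print(f"air needs roughly {air_flow / water_flow:.0f}x the volume flow of water")
```

Under these assumptions a single rack would need several cubic meters of air per second, against a few liters of water per second, which is the gap that pushes high-density designs toward liquid.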

This density shift has forced a return to a technology once considered niche: liquid cooling. We are witnessing a bifurcation in the market. Legacy enterprise data centers struggle to push PUE below 1.5 because they are locked into their existing infrastructure, while modern AI factories are designed for Direct-to-Chip (DTC) or immersion cooling. In immersion cooling, servers are submerged in a dielectric fluid that conducts heat but not electricity. This eliminates fans (which consume 10-15% of server power) and allows for coolant temperatures as high as 45°C. Since the coolant is so warm, the heat can be rejected via dry coolers rather than energy-intensive chillers, driving PUEs down to 1.02 to 1.05, close to the theoretical limit of 1.0.

[Figure: The Hierarchy of Cooling. Efficiency deltas across modern thermal management strategies]

The Future: Energy Reuse and the Death of PUE?

Paradoxically, the final stage in the history of PUE might be its obsolescence. While PUE measures the efficiency of the facility, it does not account for the value of the waste heat. Modern European facilities, particularly in Stockholm and Helsinki, are integrating into district heating systems. They pump their waste heat into the city grid to warm homes. When this occurs, the more relevant metric becomes ERE (Energy Reuse Effectiveness), which subtracts the reused energy from total facility energy before dividing by IT energy. If a data center exports more useful heat than the overhead energy it consumes, its ERE falls below 1.0, a value that PUE can never reach.
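The relationship between the two metrics can be sketched as follows, using the definition ERE = (total facility energy − reused energy) / IT energy. The facility figures are hypothetical and chosen only to show an ERE below 1.0.

```python
def pue(total_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_kwh / it_kwh


def ere(total_kwh: float, it_kwh: float, reused_kwh: float) -> float:
    """Energy Reuse Effectiveness: (total - reused) / IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if not 0.0 <= reused_kwh <= total_kwh:
        raise ValueError("reused energy must be between 0 and total energy")
    return (total_kwh - reused_kwh) / it_kwh


# Hypothetical facility: 1,200 kWh total, 1,000 kWh IT, 300 kWh exported as heat.
total, it_load, exported = 1200.0, 1000.0, 300.0

print(f"PUE = {pue(total, it_load):.2f}")            # overhead is 200 kWh
print(f"ERE = {ere(total, it_load, exported):.2f}")  # exported heat exceeds overhead
```

With no heat reuse the two metrics coincide, so ERE can be read as PUE with credit given for exported energy.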

The journey to improve data center PUE has evolved from a facilities management problem to a fundamental component of global energy strategy. What began as an effort to lower the electric bill has transformed into a high-stakes engineering discipline involving fluid dynamics, machine learning, and urban planning. As we look toward a future dominated by the crushing computational weight of AI, the lessons of the past two decades—containment, economization, and integration—remain the bedrock upon which the next generation of digital infrastructure will be built.

The history of PUE is not just a story of dropping numbers; it is the story of the data center maturing from an energy vampire into a responsible grid citizen. The era of the 2.5 PUE is dead, killed by innovation. The challenge now is to maintain the gains of the past while accommodating the rapidly rising thermal density of the future.